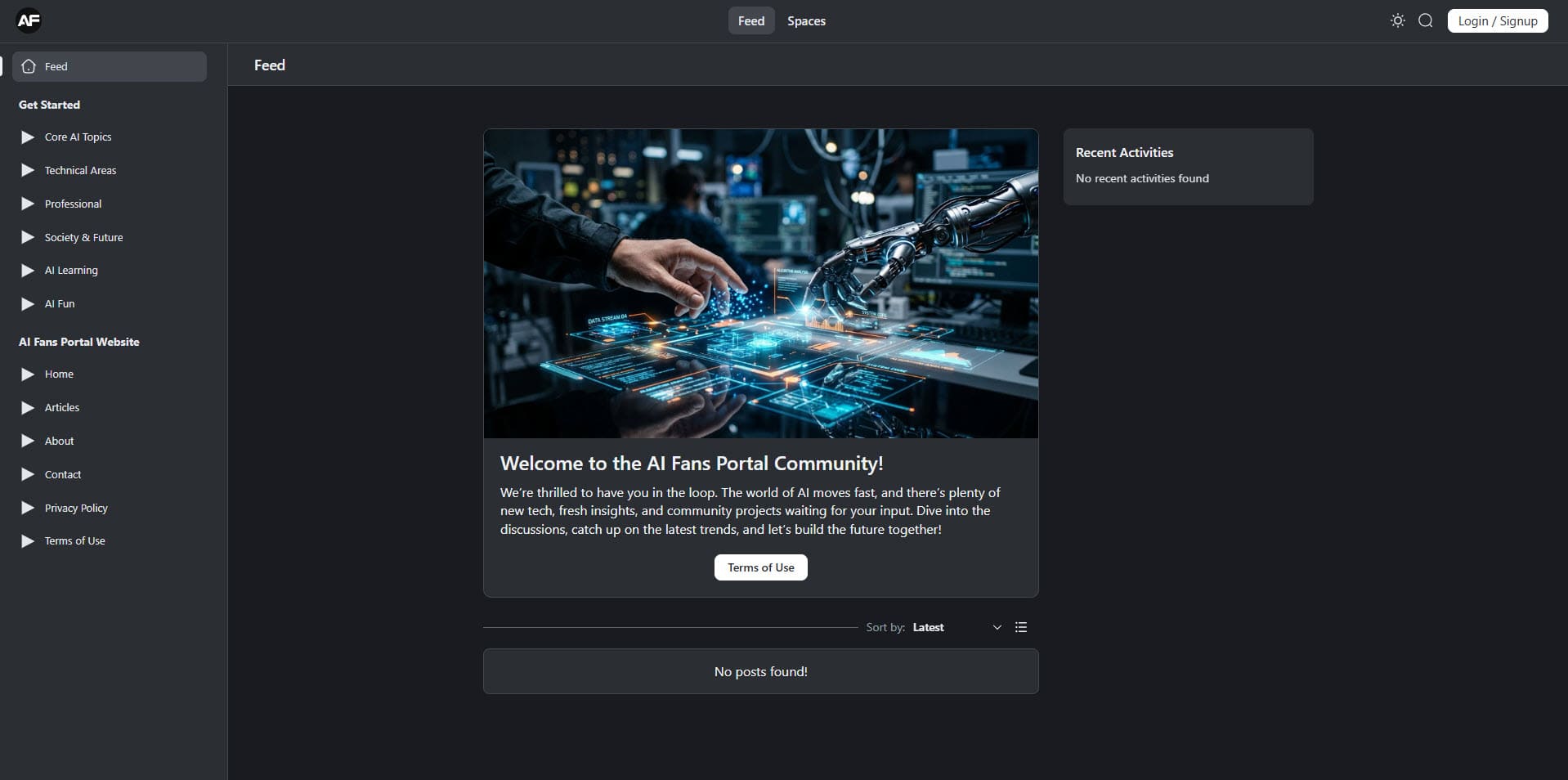

Beyond the Hype: The New Frontier of Neural Reasoning and AI Research

The landscape of Artificial Intelligence research is undergoing a major shift. For the past decade, the industry was fueled by the “scaling hypothesis”: the idea that adding more data and more compute to large language models (LLMs) would eventually produce intelligence. This approach gave us generative art and fluent chatbots, but the returns from scale alone appear to be diminishing. “More” no longer reliably equates to “smarter.”

Today, the brightest minds in AI research are pivoting. We are moving away from mere pattern matching and toward true neural reasoning. This transition isn’t just a technical upgrade; it’s a fundamental reimagining of how machines process logic, and with it how they solve problems and interact with the physical world.

The Architecture of Thought: Moving Past Next-Token Prediction

To understand where AI research is going, we must acknowledge where it has been. Standard transformer models operate on a “System 1” thinking process, a term popularized by psychologist Daniel Kahneman. This mode is fast, instinctive, and emotional. When you ask a traditional LLM a question, it predicts the most likely next token (roughly, a word or word fragment) based on statistical probability. It isn’t “thinking”; it’s calculating.
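To make “predicting the next token” concrete, here is a minimal Python sketch. The scores in `toy_logits` are invented for illustration and do not come from any real model; the point is only the mechanism of turning scores into probabilities and picking the most likely candidate.

```python
import math

def softmax(logits):
    """Convert raw scores into a probability distribution."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def greedy_next_token(logits):
    """Pick the single most probable next token (greedy decoding)."""
    probs = softmax(logits)
    return max(probs, key=probs.get)

# Hypothetical scores for the token following "The capital of France is"
toy_logits = {"Paris": 5.1, "Lyon": 2.3, "London": 1.7, "banana": -3.0}
next_token = greedy_next_token(toy_logits)
print(next_token)
```

Real systems usually sample from the distribution rather than always taking the top choice, but the step itself is the same: one probability calculation per token, with no deliberation in between.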

System 2 Thinking: The Rise of Inference-Time Compute

The most significant breakthrough in recent research is the implementation of “System 2” thinking—slow, deliberate, and logical. Instead of blurting out the first statistical answer, new models are being designed to use “inference-time compute.” This means the model “thinks” before it speaks. It weighs different paths of logic and corrects its own errors before presenting a final result.

This is the idea behind “Chain-of-Thought” (CoT) prompting and specialized reasoning models. By allowing a model to spend more compute during generation rather than only during training, researchers have reported substantial gains on multi-step tasks. Models can now solve complex mathematical and coding problems that previously stumped even the largest trillion-parameter networks.
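One simple way to spend extra compute at inference time is to sample several answers and keep the most common one, an approach often called self-consistency or majority voting. The sketch below is a toy illustration, not how any particular reasoning model works: `noisy_solver` is a hypothetical stand-in for a single sampled reasoning chain, and its 60% accuracy is an assumption chosen for the demo.

```python
import random
from collections import Counter

def noisy_solver(a, b, rng, accuracy=0.6):
    """Hypothetical stand-in for one sampled reasoning chain.

    Returns the correct sum with probability `accuracy`,
    otherwise a nearby wrong answer.
    """
    correct = a + b
    if rng.random() < accuracy:
        return correct
    return correct + rng.choice([-2, -1, 1, 2])

def majority_vote(a, b, n_samples, rng):
    """Sample several answers and return the most common one."""
    answers = [noisy_solver(a, b, rng) for _ in range(n_samples)]
    return Counter(answers).most_common(1)[0][0]

def accuracy_over_trials(n_samples, trials=2000, seed=0):
    """Fraction of trials in which the voted answer to 17 + 25 is correct."""
    rng = random.Random(seed)
    hits = sum(majority_vote(17, 25, n_samples, rng) == 42 for _ in range(trials))
    return hits / trials

single = accuracy_over_trials(n_samples=1)   # one quick answer
voted = accuracy_over_trials(n_samples=15)   # fifteen samples, then a vote
print(f"single sample: {single:.2f}, majority of 15: {voted:.2f}")
```

The solver itself never gets any smarter here. The improvement comes entirely from doing more work per question, which is the core trade behind inference-time compute.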

The Data Scarcity Problem and Synthetic Solutions

We are running out of the internet. Some projections suggest that AI labs could exhaust the high-quality human-generated text available on the public web as early as 2026. This “data wall” is forcing researchers to innovate in how models learn.

The Role of Synthetic Data in Model Evolution

If we cannot find more human data, we must create it. However, this carries the risk of “model collapse”—a phenomenon where AI trained on AI-generated content becomes progressively more “inbred” and nonsensical.

One solution being explored in top research labs is “Verifiable Synthetic Data.” This involves using a highly capable “teacher” model to generate complex problem sets and a “critic” model to verify the accuracy of the answers. By feeding the “student” model only verified, logically sound data, researchers hope to continue the scaling trajectory without needing fresh human input.
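The generate-then-verify loop can be sketched in a few lines. Everything here is a simplified assumption: `teacher_generate` is a hypothetical teacher that occasionally makes mistakes, and the critic is a plain arithmetic check rather than a second model. The filtering pattern is the part that carries over.

```python
import random

def teacher_generate(rng, error_rate=0.2):
    """Hypothetical teacher: proposes a multiplication problem and an answer, sometimes wrong."""
    a, b = rng.randint(1, 99), rng.randint(1, 99)
    answer = a * b
    if rng.random() < error_rate:
        answer += rng.randint(1, 9)  # inject a mistake
    return {"question": f"{a} * {b}", "a": a, "b": b, "answer": answer}

def critic_verify(sample):
    """Critic: recompute the result independently and accept only exact matches."""
    return sample["a"] * sample["b"] == sample["answer"]

def build_verified_dataset(n_candidates, seed=0):
    """Generate candidates, then keep only the ones the critic accepts."""
    rng = random.Random(seed)
    candidates = [teacher_generate(rng) for _ in range(n_candidates)]
    return [s for s in candidates if critic_verify(s)]

dataset = build_verified_dataset(1000)
print(f"kept {len(dataset)} of 1000 candidates")
```

This works cleanly for math and code because answers can be checked mechanically. Domains without a reliable verifier are much harder, which is why the approach is strongest on exactly the problems reasoning models target.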

World Models: AI That Understands Physics

One of the loudest criticisms of current AI is that it lacks “common sense.” A model might be able to write a Shakespearean sonnet but won’t understand that if you tip over a glass of water, the floor gets wet. This is because LLMs are “disembodied”: they know words, but not the world.

Bridging the Gap Between Text and Reality

AI research is now heavily focused on “World Models.” These are systems designed to predict the physical consequences of actions within a simulated environment. By training AI on video data and physics simulations, researchers are teaching machines the underlying laws of our reality.
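As a deliberately tiny illustration of the idea, the sketch below “learns” one physical regularity (constant downward acceleration) from observed states of a simulated falling ball, then uses it to predict an unseen situation. Real world models learn far richer dynamics from video with neural networks; the function names and the one-parameter model here are assumptions made for the example.

```python
def simulate_fall(height, dt=0.01, g=9.81):
    """Ground-truth simulator: returns (height, velocity) states until the ball lands."""
    states = [(height, 0.0)]
    h, v = height, 0.0
    while h > 0:
        v -= g * dt
        h += v * dt
        states.append((h, v))
    return states

def fit_acceleration(states, dt=0.01):
    """'Learn' the dynamics: estimate a constant acceleration from observed velocity changes."""
    deltas = [(v1 - v0) / dt for (_, v0), (_, v1) in zip(states, states[1:])]
    return sum(deltas) / len(deltas)

def predict_landing_time(height, accel, dt=0.01):
    """Roll the learned model forward to predict when a ball dropped from `height` lands."""
    h, v, t = height, 0.0, 0.0
    while h > 0:
        v += accel * dt
        h += v * dt
        t += dt
    return t

observed = simulate_fall(height=2.0)           # what the model gets to watch
learned_accel = fit_acceleration(observed)     # what it infers from watching
predicted = predict_landing_time(height=5.0, accel=learned_accel)  # a drop it never saw
print(f"learned acceleration: {learned_accel:.2f}, predicted landing: {predicted:.2f}s")
```

The model was only shown a 2-meter drop, yet it can forecast a 5-meter one because it captured the underlying rule rather than memorizing a trajectory.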

This is the bridge to advanced robotics. Once an AI understands gravity, friction, and spatial dimensions, it can move from a digital chat window to a physical agent capable of navigating a warehouse or assisting in a laboratory. This transition from “Generative AI” to “Physical AI” is perhaps the most exciting frontier of the decade.

The Ethics of Autonomy: Researching Safety in a Reasoning World

As models become more capable of independent reasoning, the conversation around AI safety has shifted. We are no longer just worried about biased text or “hallucinations.” The new research focus is on “Alignment”—ensuring that an AI’s internal logic matches human values.

Interpretability: Looking Inside the Black Box

One of the most difficult challenges in AI research is that these models are “black boxes.” We see the input and the output, but the billions or trillions of parameters in between are nearly impossible for a human to parse.

“Mechanistic Interpretability” is a growing field of research dedicated to reverse-engineering these neural networks. Researchers are essentially performing digital neuroscience, trying to identify which specific “neurons” or circuits in a model are responsible for concepts like “honesty” or “deception.” If we can understand how a model reaches a conclusion, we can build guardrails that are baked into the logic rather than slapped on afterward as a filter.
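One of the simplest tools in this spirit is to compare a model’s average internal activations when a concept is present against when it is absent. The sketch below uses synthetic activations where, by construction, unit 3 responds to the concept; the data, the dimensions, and the helper names are all assumptions for illustration, and real interpretability work on actual networks is considerably messier.

```python
import random

def make_activations(n, concept_present, rng, dim=8, concept_unit=3):
    """Hypothetical activations: unit `concept_unit` fires more when the concept is present."""
    rows = []
    for _ in range(n):
        row = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        if concept_present:
            row[concept_unit] += 4.0
        rows.append(row)
    return rows

def mean_vector(rows):
    """Average activation of each unit across all examples."""
    return [sum(col) / len(rows) for col in zip(*rows)]

def concept_direction(with_concept, without_concept):
    """Difference of mean activations: a simple linear direction for the concept."""
    return [a - b for a, b in zip(mean_vector(with_concept), mean_vector(without_concept))]

rng = random.Random(0)
positive = make_activations(200, True, rng)    # inputs where the concept appears
negative = make_activations(200, False, rng)   # inputs where it does not
direction = concept_direction(positive, negative)
most_responsible_unit = max(range(len(direction)), key=lambda i: abs(direction[i]))
print(f"unit most associated with the concept: {most_responsible_unit}")
```

In a real model a concept is rarely confined to a single unit, so the full `direction` vector tends to be more informative than any one entry.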

Small Language Models (SLMs) and the Decentralization of AI

While the headlines are dominated by massive models requiring billions in hardware, a quieter revolution is happening in “efficiency research.” Not every task requires a god-like intelligence; sometimes, you just need a model that can summarize a document on your phone without an internet connection.

The Power of Distillation

Researchers are finding ways to “distill” the knowledge of giant models into smaller, more efficient packages by training a compact “student” model to mimic the outputs of a larger “teacher.” Combined with compression techniques like quantization (storing weights at lower precision) and pruning (removing weights that contribute little), this is driving the rise of Small Language Models (SLMs) that rival the performance of yesterday’s giants. These models run on consumer-grade hardware. This democratizes AI, moving the power away from a few massive corporations and into the hands of individual developers and researchers.
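Quantization and pruning are easy to demonstrate on a handful of numbers. The following is a minimal sketch using symmetric 8-bit quantization and magnitude-based pruning; the weight values are made up, and production toolchains use more sophisticated schemes (per-channel scales, calibration data, retraining after pruning).

```python
def quantize_int8(weights):
    """Symmetric quantization: map floats onto integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale

def dequantize(q_weights, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in q_weights]

def prune_by_magnitude(weights, keep_fraction):
    """Zero out the smallest-magnitude weights, keeping roughly `keep_fraction` of them."""
    n_keep = max(1, int(len(weights) * keep_fraction))
    threshold = sorted((abs(w) for w in weights), reverse=True)[n_keep - 1]
    return [w if abs(w) >= threshold else 0.0 for w in weights]

# Made-up weights for illustration
weights = [0.82, -0.03, 0.41, -1.27, 0.005, 0.66, -0.09, 0.30]

q_weights, scale = quantize_int8(weights)
restored = dequantize(q_weights, scale)
pruned = prune_by_magnitude(weights, keep_fraction=0.5)

print(q_weights)
print(pruned)
```

Each quantized weight fits in one byte instead of four, and the pruned list can be stored sparsely. Both trade a small amount of precision for a model that fits on much cheaper hardware.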

Conclusion: The Path Toward AGI

The ultimate goal of AI research remains the pursuit of Artificial General Intelligence (AGI): a system capable of performing any intellectual task a human can. We are not there yet, but the shift from “probabilistic guessing” to “structured reasoning” suggests we may have found a more promising path.

As we move forward, the most successful AI systems won’t be the ones with the most parameters, but the ones with the most robust logic. The future of research lies in the marriage of language, physical understanding, and ethical self-correction. For researchers and enthusiasts alike, the “gold rush” of simple generation is over. The era of deep, meaningful machine intelligence has begun.